VictoriaMetrics vs. OpenTSDB

I’ve written a number of times recently about TSDBs at $JOB. For the last few years, we’ve been using OpenTSDB. It’s been…a mixed bag (#1, #2).

Over the last few months we’ve been exploring other options for TSDBs (as I mentioned previously). We landed on VictoriaMetrics. It provided the majority of the features we needed (excluding data rebalancing in a cluster, which we may be able to mitigate). It also handles vastly higher cardinality (2 million+ series on a metric is apparently okay, whereas OpenTSDB starts to fall down at ~10k series), is generally more performant than OpenTSDB (details below), is far simpler to deploy (no Hadoop ecosystem), has an active and responsive community, and…the list could go on and on.

Let’s get into how much happier we are with VictoriaMetrics…

Current Deployment

Currently we have 30 physical nodes (including ingest) for Hadoop/HBase/OpenTSDB/Rollup jobs. On average the hosts have 32 cores x 128 GB RAM apiece (a quick bit of math says that’s 3840 GB of RAM and 960 cores used for this system). Importantly, this is dedicated hardware taking up space in a datacenter, too. Thirty 2U machines add up to 60U, which is more than an entire standard 42U datacenter rack by itself. And while the underlying Hadoop install does perform other functions, OpenTSDB is its primary use case. If we assume that 75% of the processing on the hosts goes to OpenTSDB (which is somewhat reasonable: shutting off OpenTSDB shows load on the hosts drop by ~75-80%), we’re using 2880 GB of RAM and 720 CPU cores just for OpenTSDB.

What about storage? HBase (the underlying store for OpenTSDB) is currently using ~61TB of space. That covers 3 months of “raw” data (data stored at exactly the rate it was initially submitted) and ~13 months of data stored at a 10-minute “rollup” window (which in some cases is 1/10th the size, and in others may actually take up more space).

New VictoriaMetrics Deployment

Our initial install of VictoriaMetrics has 41 nodes (including ingest):

- The storage layer (vmstorage instances):
  - 8 cores x 16 GB RAM
  - 4 TB HDD-speed (spinner) Ceph volume
  - 28 hosts
- The “access” tier (hosts that share the vmselect and vminsert services):
  - 4 cores x 4 GB RAM
  - 9 hosts
- The “insert” hosts:
  - 16 cores x 16 GB RAM (will likely reduce this)
  - 4 hosts

Again, some quick math: that’s 324 cores and 548 GB of RAM. Compared with the 720 cores and 2880 GB of RAM we attributed to OpenTSDB above, that’s 19% of the RAM and 45% of the CPU cores.
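To double-check that arithmetic, here is a small Python sketch. It tallies the tiers listed above and compares them against the OpenTSDB share from the previous section (30 hosts x 32 cores x 128 GB, with the ~75% attribution assumed there). The tier labels are just dictionary keys picked for readability.

```python
# Per-tier specs copied from the list above: (cores per host, GB RAM per host, hosts).
VM_TIERS = {
    "vmstorage": (8, 16, 28),
    "access (vmselect + vminsert)": (4, 4, 9),
    "insert": (16, 16, 4),
}

# OpenTSDB baseline: 30 hosts x 32 cores x 128 GB RAM, ~75% attributed to OpenTSDB.
OTSDB_HOSTS, OTSDB_CORES, OTSDB_RAM_GB, OTSDB_SHARE = 30, 32, 128, 0.75


def vm_totals(tiers):
    """Return (hosts, cores, ram_gb) summed across all tiers."""
    hosts = sum(n for _, _, n in tiers.values())
    cores = sum(c * n for c, _, n in tiers.values())
    ram_gb = sum(r * n for _, r, n in tiers.values())
    return hosts, cores, ram_gb


def otsdb_share():
    """Return (cores, ram_gb) attributed to OpenTSDB alone."""
    return (OTSDB_HOSTS * OTSDB_CORES * OTSDB_SHARE,
            OTSDB_HOSTS * OTSDB_RAM_GB * OTSDB_SHARE)


if __name__ == "__main__":
    hosts, cores, ram_gb = vm_totals(VM_TIERS)
    o_cores, o_ram_gb = otsdb_share()
    print(f"VictoriaMetrics: {hosts} hosts, {cores} cores, {ram_gb} GB RAM")
    print(f"vs. OpenTSDB: {ram_gb / o_ram_gb:.0%} of the RAM, {cores / o_cores:.0%} of the cores")
```

Run as a script, it prints 41 hosts, 324 cores, and 548 GB of RAM, followed by 19% of the RAM and 45% of the cores.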

As for storage, while we haven’t completed our data migration from the old system to the new, ~1 month of the same ingest data in VictoriaMetrics fits in ~4.5TB of space. This implies our intended 1 year of raw data should fit in ~54TB of space. Which is rather impressive (thanks, zstd). 3 months of raw data plus 1 year of data, aggregated at 10 minutes, in OpenTSDB takes up ~63TB of space. So that’s ~15% reduction in storage space for more accurate data. Wild.
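The storage projection is a straight linear extrapolation. Here is a minimal Python sketch of it. It assumes ingest volume and compression ratio stay constant from month to month, which real data may not do.

```python
def project_storage_tb(tb_per_month, months):
    """Linear extrapolation: assumes ingest volume and compression stay constant."""
    return tb_per_month * months


def reduction(old_tb, new_tb):
    """Fractional saving when going from old_tb to new_tb."""
    return (old_tb - new_tb) / old_tb


vm_year_tb = project_storage_tb(4.5, 12)  # ~4.5 TB observed for ~1 month of raw data
print(f"Projected 1 year of raw data: ~{vm_year_tb:.0f} TB")
print(f"Saving vs. ~63 TB in OpenTSDB: {reduction(63, vm_year_tb):.0%}")
```

With these inputs, it projects 54 TB for a year and a saving of about 14% against ~63 TB, which is the ballpark quoted above.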

Performance Comparison

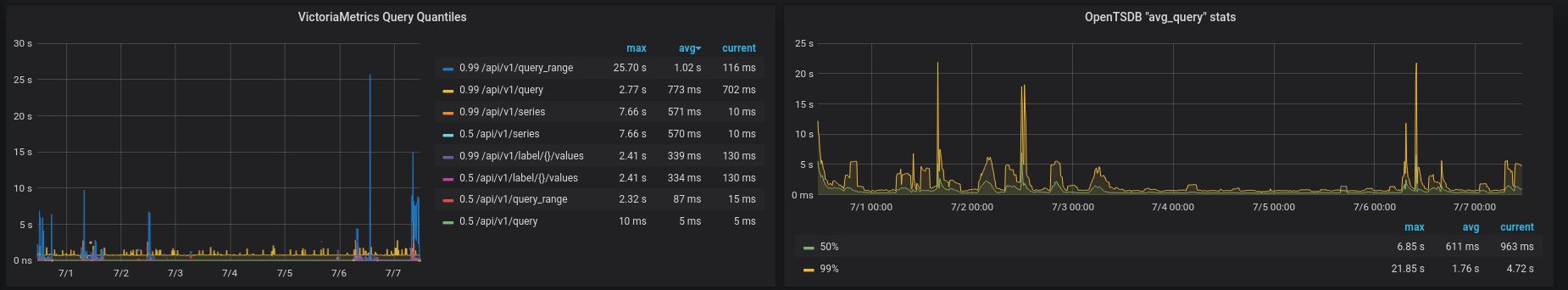

This dashboard shows the performance difference in queries between VictoriaMetrics and OpenTSDB:

If we look carefully, we can see that the 99th-percentile figures roughly match up, while average query performance in VictoriaMetrics is vastly better. Some of this may come down to good caching in VictoriaMetrics.

(The primary issue with this graph is that we’re comparing apples to oranges. VictoriaMetrics produces nice histogram calculations around query performance. OpenTSDB produces a series of metrics with names like tsd_avg_query_maxhbasetime, and the naming doesn’t make entirely clear what they actually measure.)

Beyond basic resource calculation…

Importantly, there’s more to this migration than simply using fewer resources:

PromQL vs. OpenTSDB (and gexp)

VictoriaMetrics supports the PromQL query language (and extends it with its own MetricsQL additions). OpenTSDB provides a custom query language that, while functional, really cannot hold a candle to PromQL.

Let’s have an example or two, shall we?

Simple queries

Let’s imagine a metric with data points like these:

{ "metric": "disk_used_percent", "tags": { "host": "host1", "path": "/" }, "value": 37.3 }

{ "metric": "disk_used_percent", "tags": { "host": "host1", "path": "/data" }, "value": 10.2 }

So…two series (split on the path tag). Simple. We can easily imagine this metric expanding as the number of unique hosts submitting data grows.

How would we retrieve this data from VictoriaMetrics? And how would we retrieve it from OpenTSDB?

OpenTSDB

OpenTSDB automatically aggregates any tags that aren’t explicitly mentioned, so we need to reference the path tag to ensure we see both series correctly:

/api/query?start=15m-ago&m=sum:disk_used_percent{path=*}

This query will return two series for the last 15 minutes of data.

If we had left out path, we would receive only one series, with the two values aggregated together (by a sum) like so:

{ "metric": "disk_used_percent", "tags": { "host": "host1" }, "value": 47.5 }

For new users, this…“eccentricity” of OpenTSDB’s query style can be very confusing.
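To make OpenTSDB’s implicit aggregation concrete, here is a simplified Python emulation of a sum: query over the two example points. It is not OpenTSDB’s actual code. Tags named in the query (like path=*) stay as separate series, and everything else is summed together.

```python
from collections import defaultdict


def otsdb_style_sum(points, group_tags):
    """Sum values, keeping only the tags named in group_tags (like tag=* in a query).

    Simplified: every other tag is collapsed into the aggregate. Real OpenTSDB
    responses also echo tags whose value is identical across the whole group
    (as host is in the example above) and list the rest under aggregateTags.
    """
    groups = defaultdict(float)
    for p in points:
        key = tuple(sorted((k, v) for k, v in p["tags"].items() if k in group_tags))
        groups[key] += p["value"]
    return dict(groups)


points = [
    {"tags": {"host": "host1", "path": "/"}, "value": 37.3},
    {"tags": {"host": "host1", "path": "/data"}, "value": 10.2},
]

print(otsdb_style_sum(points, {"path"}))  # two series, one per path
print(otsdb_style_sum(points, set()))     # one series: 37.3 + 10.2
```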

VictoriaMetrics

VictoriaMetrics (and all PromQL-compatible query languages) automatically keeps every tag (a “label” in PromQL terms) as a separate series unless we explicitly aggregate it away. To display every raw sample of every series of our metric for the last 15 minutes, we could perform this query:

/api/v1/query?query=disk_used_percent[15m]

If we wanted to aggregate by host (although summing disk usage percentages per host is a strange choice), we could use the following:

/api/v1/query?query=sum(disk_used_percent) by (host)

In my opinion, this is a much more transparent way to display this data.
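The query strings above are shown unencoded for readability. Sent over HTTP, the spaces, parentheses, and brackets have to be percent-encoded. Here is a minimal Python sketch using only the standard library. It assumes a single-node VictoriaMetrics listening on its default port 8428. BASE_URL, instant_query_url, and run_query are illustrative names, not part of any VictoriaMetrics client.

```python
import json
import urllib.request
from urllib.parse import urlencode

# Assumption: a single-node VictoriaMetrics on its default port. A cluster's
# vmselect serves the same API under a different path prefix.
BASE_URL = "http://localhost:8428"


def instant_query_url(base_url, promql):
    """Build a /api/v1/query URL with the PromQL expression percent-encoded."""
    query = urlencode({"query": promql})
    return f"{base_url.rstrip('/')}/api/v1/query?{query}"


def run_query(base_url, promql, timeout=10):
    """Fetch and decode the JSON response (needs a reachable server)."""
    with urllib.request.urlopen(instant_query_url(base_url, promql), timeout=timeout) as resp:
        return json.load(resp)


print(instant_query_url(BASE_URL, "sum(disk_used_percent) by (host)"))
# http://localhost:8428/api/v1/query?query=sum%28disk_used_percent%29+by+%28host%29
```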

Complex Queries

OpenTSDB

It’s difficult to effectively describe the exp and gexp endpoints in OpenTSDB. Their documentation is…verbose. Generally speaking, we can conceptualize the exp and gexp endpoints in OpenTSDB as being able to handle more complex queries, but unfortunately, systems like Grafana don’t support them.

VictoriaMetrics

Complex queries in PromQL are logical extensions of simple queries. If we wanted to use our example metric from above to find any disks where usage is above 90%, we could simply say:

/api/v1/query -d 'query=disk_used_percent > 90'

Similarly, if we imagine a metric about NTP drift (let’s call it ntp_offset) that stores the actual offset in seconds, we could easily discover hosts with drift greater than 60 seconds using the following query:

/api/v1/query -d 'query=sum(abs(ntp_offset)) by (host) > 60'
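A comparison such as > 90 doesn’t return true/false per series. Instead, it filters out every series that fails the test. To illustrate those semantics, here is a hypothetical client-side Python emulation over a Prometheus-style instant-vector response. VictoriaMetrics evaluates all of this server-side, and the sample response and helper names are made up for the example.

```python
import json
from collections import defaultdict


def parse_vector(body):
    """Turn a Prometheus-style instant-vector response into (labels, value) pairs."""
    doc = json.loads(body)
    if doc.get("status") != "success" or doc["data"]["resultType"] != "vector":
        raise ValueError("expected a successful instant-vector response")
    # Each sample is [unix_timestamp, "value-as-string"].
    return [(r["metric"], float(r["value"][1])) for r in doc["data"]["result"]]


def filter_gt(samples, threshold):
    """Like PromQL `expr > threshold` (no `bool` modifier): drop failing samples."""
    return [(labels, v) for labels, v in samples if v > threshold]


def sum_abs_by(samples, label):
    """Like PromQL `sum(abs(expr)) by (label)`; a missing label forms its own group."""
    totals = defaultdict(float)
    for labels, v in samples:
        totals[labels.get(label, "")] += abs(v)
    return [({label: k} if k else {}, v) for k, v in totals.items()]


# Made-up sample response for illustration.
body = json.dumps({"status": "success", "data": {"resultType": "vector", "result": [
    {"metric": {"host": "host1", "path": "/"}, "value": [1700000000, "37.3"]},
    {"metric": {"host": "host2", "path": "/data"}, "value": [1700000000, "95.1"]},
]}})
print(filter_gt(parse_vector(body), 90))  # only host2's /data disk survives
```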

The performance

OpenTSDB

OpenTSDB isn’t, by any means, slow. You can request a rather large amount of data very quickly. But it also isn’t very fast. Largely due to architectural limitations within HBase itself, OpenTSDB particularly starts to struggle as the requested data becomes more complex.

- If you have a high cardinality metric, OpenTSDB requires much larger amounts of processing time to retrieve it.

- Windowing of data (only requesting 1 sample per X time range) is either performed with pre-calculated aggregates or done after all query data is retrieved (requiring large amounts of memory, network traffic, and processing to complete - often causing instability in the cluster with larger queries).
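“Windowing” here means what OpenTSDB calls downsampling (a specifier such as 10m-avg in the query). Below is a minimal Python sketch of average-based downsampling over aligned windows. It illustrates the concept and is not OpenTSDB’s implementation. Note that it has to see every raw point before producing output, which is why doing this after retrieval gets expensive for large queries.

```python
def downsample_avg(points, window_s):
    """Reduce (unix_ts, value) points to one average per aligned window.

    A point at ts belongs to the window starting at ts - ts % window_s,
    so windows line up on multiples of window_s (e.g. 600 for 10 minutes).
    """
    buckets = {}
    for ts, value in points:
        start = ts - ts % window_s
        total, count = buckets.get(start, (0.0, 0))
        buckets[start] = (total + value, count + 1)
    return [(start, total / count) for start, (total, count) in sorted(buckets.items())]


raw = [(0, 1.0), (30, 3.0), (60, 5.0), (119, 7.0), (120, 9.0)]
print(downsample_avg(raw, 60))  # [(0, 2.0), (60, 6.0), (120, 9.0)]
```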

VictoriaMetrics

VictoriaMetrics is fast. Below is a pretty graph showing the 99th-percentile query durations in VictoriaMetrics over the last 2 days:

We can see that only rarely do we get queries that take longer than a few seconds, and only once did we get a query that would have felt slow to a user (one 10-second-long query).


- While cardinality affects all databases, VictoriaMetrics is able to significantly reduce the impact through clever query optimizations (these are largely similar to the optimizations Prometheus applies to PromQL queries, so the same improvements show up there as well).

- Windowing of data occurs automatically, meaning there’s less need for pre-calculated aggregates or additional instances with “coarser” data to help improve query performance.

The communities

The OpenTSDB community is…hurting. I laid this out more in another post, so there’s no reason to re-hash it. Suffice to say, it’s hard to get help with OpenTSDB.

The VictoriaMetrics community is active and quite helpful. In the process of getting our systems set up, I’ve submitted bugs and code upstream and typically received helpful responses quickly. For example:

- https://github.com/VictoriaMetrics/VictoriaMetrics/pull/1089 and https://github.com/VictoriaMetrics/VictoriaMetrics/pull/1198
  - These two PRs make up an OpenTSDB -> VictoriaMetrics migration tool. Thanks to the devs, I was able to give the community a functional tool to help others in a similar situation get out!
- https://github.com/VictoriaMetrics/VictoriaMetrics/issues/996
  - Learning about how different endpoints function (with significant detail in the responses).

Conclusion

We’re quite excited about VictoriaMetrics. It’s made building useful visualizations, alerts, and general tooling much easier (and more in line with where the industry is headed, which is also nice).

Addendum

One critically important part of migrating users from one data source to another is moving their existing data. When we started evaluating VictoriaMetrics, there wasn’t a migration tool between OpenTSDB and VictoriaMetrics, so I wrote one: https://github.com/VictoriaMetrics/VictoriaMetrics/tree/master/app/vmctl/opentsdb.

Being able to leverage the existing migration patterns the VictoriaMetrics team had already provided made writing the tool relatively painless. The primary goal was to avoid the problems with OpenTSDB queries I described above. To that end, individual queries are performed over short time ranges, and the results are written to VictoriaMetrics in batches. This keeps the query load on OpenTSDB low and ensures restarts are at least possible.
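The chunk-then-batch approach can be sketched in a few lines of Python. This illustrates the idea and is not vmctl’s code (vmctl is written in Go). fetch and write are hypothetical callables standing in for an OpenTSDB query and a VictoriaMetrics import, and the chunk and batch sizes are arbitrary.

```python
from datetime import datetime, timedelta, timezone


def time_chunks(start, end, step):
    """Yield consecutive [lo, hi) windows of at most `step` covering [start, end)."""
    if step <= timedelta(0):
        raise ValueError("step must be positive")
    lo = start
    while lo < end:
        hi = min(lo + step, end)
        yield lo, hi
        lo = hi


def migrate(series, start, end, step, fetch, write, batch_size=1000):
    """Copy one series chunk by chunk, writing each chunk in fixed-size batches.

    fetch(series, lo, hi) -> list of points and write(points) are hypothetical
    stand-ins for an OpenTSDB query and a VictoriaMetrics import call.
    done_until tracks progress; persisting it after each chunk would let a
    restart resume from there. It equals `end` when the copy completes.
    """
    done_until = start
    for lo, hi in time_chunks(start, end, step):
        points = fetch(series, lo, hi)
        for i in range(0, len(points), batch_size):
            write(points[i:i + batch_size])
        done_until = hi
    return done_until


if __name__ == "__main__":
    def fake_fetch(series, lo, hi):
        return [(lo, 1.0)] * 3  # pretend every chunk holds 3 points

    start = datetime(2021, 1, 1, tzinfo=timezone.utc)
    written = []
    done = migrate("disk_used_percent", start, start + timedelta(hours=5),
                   timedelta(hours=2), fake_fetch, written.append, batch_size=2)
    print(done, len(written))  # 3 chunks x 2 batches each -> 6 writes
```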

More documentation on the migration tool is here: https://github.com/VictoriaMetrics/VictoriaMetrics/tree/master/app/vmctl#migrating-data-from-opentsdb